TensorFlow image resize

4/13/2023

Your choice for the set of metrics is not limited to precision and recall. There are some other options available in the TensorFlow API. Take your time to choose the option you want for tracking your particular model.

If you carefully followed the instructions in the first article, then dataset preparation should sound familiar. As a reminder, we prepared two files (validation.record and test.record) needed for model evaluation and placed them into Tensorflow/workspace/data. If your data folder has the same files as below, then you're all set to proceed to the next step!

    Tensorflow/workspace/data/
    ├─ train.record       # dataset that was used to train our model
    ├─ validation.record  # dataset that we'll use for model validation
    └─ test.record        # dataset that we'll use to test our model

If you don't have all of these .record files in your data folder but still want to do the evaluation, here is something to consider:

- validation.record is needed to evaluate your model during training.
- test.record is needed to check end-model performance when it's already been trained.

The traditional approach to machine learning needs 3 separate sets: for training, evaluation and testing. I urge you to follow it, but in case you have a valid reason to avoid one of these sets, then prepare only those record files that are relevant for your purposes.
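As a rough illustration of the three-set approach, the sketch below splits a list of sample names into training, validation and test subsets. The function name `split_three_ways`, the 70/15/15 proportions and the fixed seed are assumptions made for this example; they are not taken from the original tutorial, and the sketch does not write any .record files.

```python
import random


def split_three_ways(items, val_fraction=0.15, test_fraction=0.15, seed=0):
    """Shuffle items and split them into (train, validation, test) lists.

    Hypothetical helper: the fractions and seed are illustrative defaults.
    """
    if val_fraction < 0 or test_fraction < 0 or val_fraction + test_fraction >= 1:
        raise ValueError("fractions must be non-negative and sum to less than 1")

    shuffled = list(items)
    # A dedicated Random instance keeps the split reproducible
    # without touching the global random state.
    random.Random(seed).shuffle(shuffled)

    n_test = round(len(shuffled) * test_fraction)
    n_val = round(len(shuffled) * val_fraction)

    test = shuffled[:n_test]
    validation = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, validation, test


if __name__ == "__main__":
    names = [f"image_{i:03d}.jpg" for i in range(100)]
    train, validation, test = split_three_ways(names)
    print(len(train), len(validation), len(test))
```

Each sample ends up in exactly one subset, so the test set stays unseen until the model has finished training.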

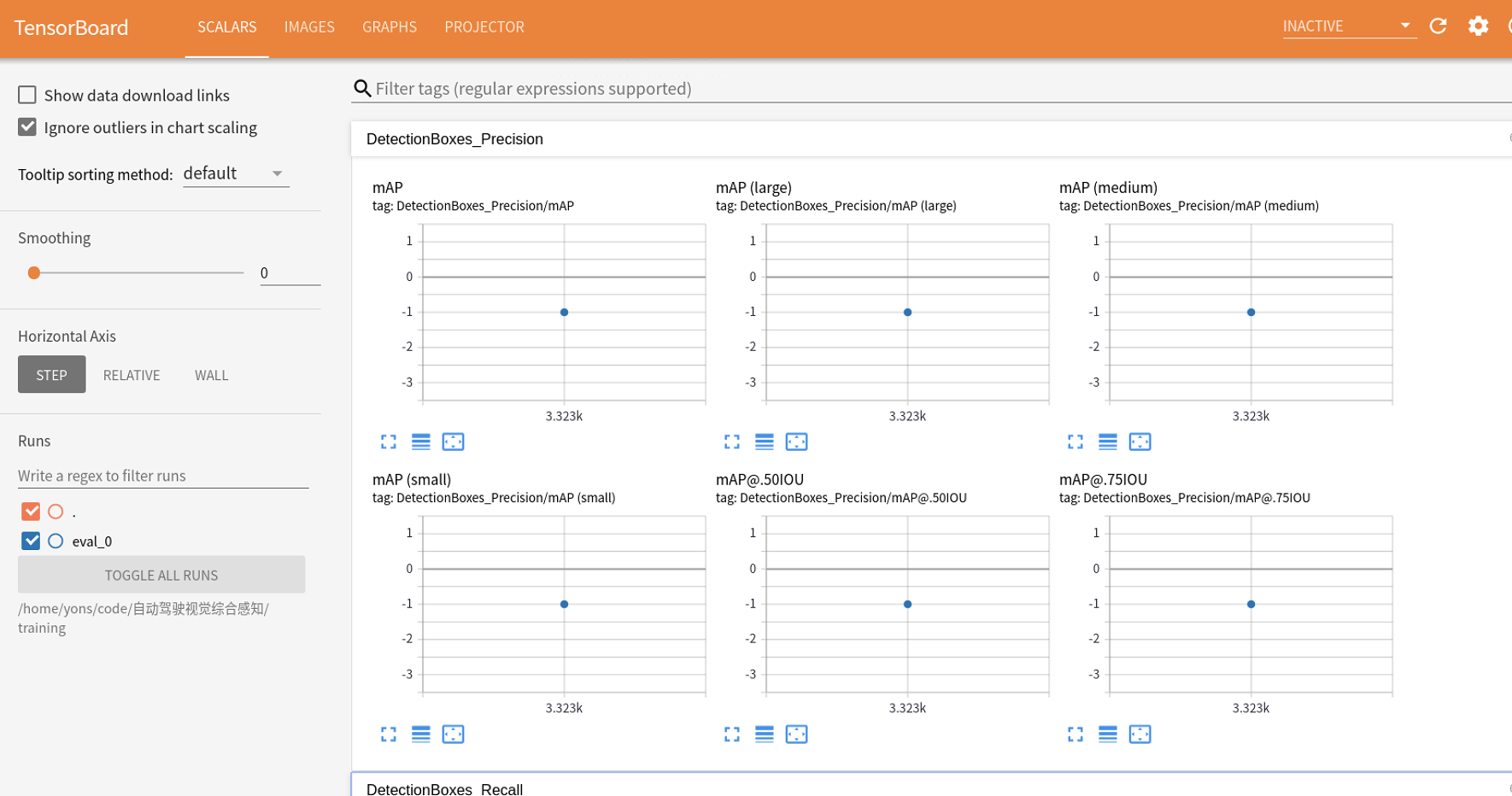

Mean average precision (mAP) shown as a plot after we enable it for model validation. Note that mAP is calculated for different IoU values. Average recall is not shown but also becomes available.

A problem that I needed to solve today at work was how to import a single color image in OpenCV format, with variable X and Y dimensions, into a TensorFlow Inception V4 model that expects input of dimensions (x, 299, 299, 1) for prediction. The dimension (299, 299, 3) is a color image, while (299, 299, 1) is in gray scale. This is a very short post with a few lines of Python code.

OpenCV uses BGR format, therefore the original image must be read and converted to RGB.

    import cv2
    import tensorflow as tf

    img_bgr = cv2.imread(filename='/path/to/file')
    img_rgb = cv2.cvtColor(img_bgr, cv2.COLOR_BGR2RGB)

Once this is complete, the image can be placed into a TensorFlow tensor.

    img_tensor = tf.convert_to_tensor(img_rgb, dtype=tf.float32)

Now the image can be converted to gray-scale using the TensorFlow API. Some PIL and OpenCV routines will output a gray-scale image but still retain 3 channels, leaving the image appearing gray but unflattened. Such an image is still color, in spite of its visual appearance. The Inception v4 model that I am using requires a single-channel image, which is true gray-scale.

    img_gray = tf.image.rgb_to_grayscale(img_tensor)

Now the image must be resized to 299×299. The aspect ratio is preserved: the image is scaled (shrunk if it is larger than the target, enlarged if it is smaller) until it fits within 299×299, and the pad then adds "0"s to any remaining pixels.

    img_resized = tf.image.resize_with_pad(img_gray, 299, 299)

At this point our tensor dimension is (299, 299, 1). Another dimension must be added to the tensor prior to feeding it into the prediction algorithm.

    img_final = tf.expand_dims(img_resized, 0)

This adds a dimension at the highest level of the tensor, giving it dimensions (1, 299, 299, 1). After this step the tensor is prepared for prediction operations.
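To make the resize-and-pad step in this post more concrete, here is a plain-Python sketch of the arithmetic behind an aspect-ratio-preserving resize followed by centered zero padding. The function name `resize_with_pad_geometry` is hypothetical, and the integer rounding used here is an assumption; TensorFlow's own implementation may differ by a pixel in edge cases. The sketch only computes sizes and does not touch any pixel data.

```python
def resize_with_pad_geometry(height, width, target_height=299, target_width=299):
    """Return (resized_h, resized_w, pad_top, pad_bottom, pad_left, pad_right).

    Hypothetical helper illustrating the scale-then-pad idea.
    """
    if min(height, width, target_height, target_width) <= 0:
        raise ValueError("all dimensions must be positive")

    # Compare aspect ratios with integer math to avoid floating-point surprises.
    if height * target_width >= width * target_height:
        # Height is the limiting side: it fills the target exactly.
        resized_h = target_height
        resized_w = max(1, (width * target_height) // height)
    else:
        # Width is the limiting side.
        resized_w = target_width
        resized_h = max(1, (height * target_width) // width)

    # Split the leftover pixels as evenly as possible between both sides.
    pad_top = (target_height - resized_h) // 2
    pad_bottom = target_height - resized_h - pad_top
    pad_left = (target_width - resized_w) // 2
    pad_right = target_width - resized_w - pad_left
    return resized_h, resized_w, pad_top, pad_bottom, pad_left, pad_right


if __name__ == "__main__":
    # A 480x640 (height x width) frame is shrunk to 224x299 and padded vertically.
    print(resize_with_pad_geometry(480, 640))  # (224, 299, 37, 38, 0, 0)
    # A small 100x50 image is enlarged to 299x149 and padded horizontally.
    print(resize_with_pad_geometry(100, 50))   # (299, 149, 0, 0, 75, 75)
```

In both cases the resized size plus the padding adds up to 299×299, which is why the tensor has dimensions (299, 299, 1) after this step regardless of the original image size.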
